
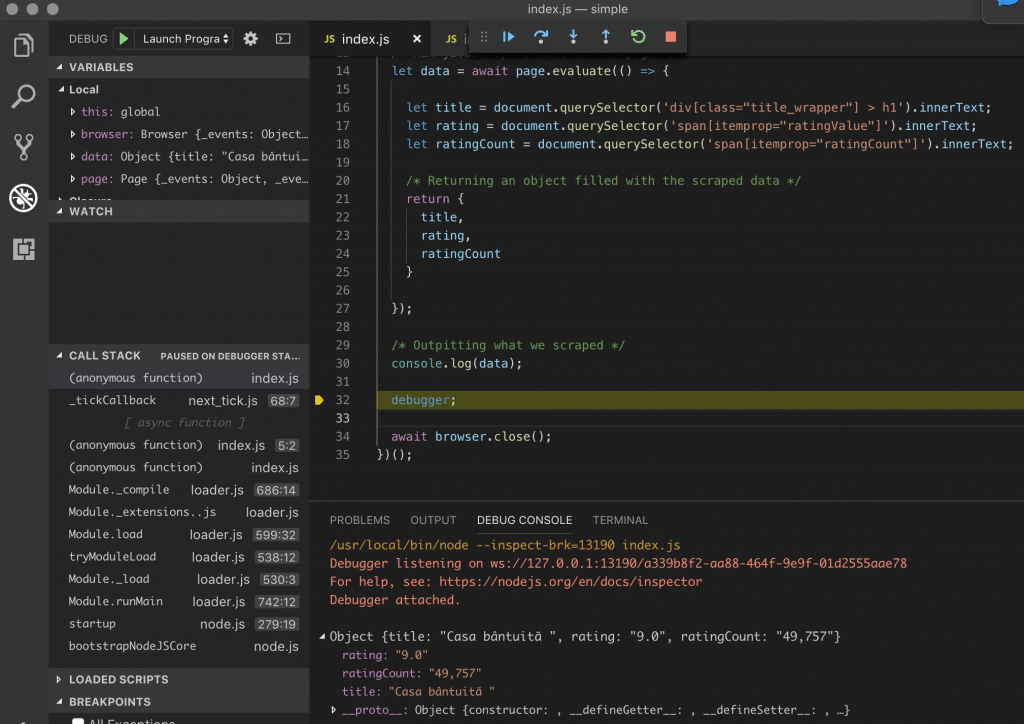

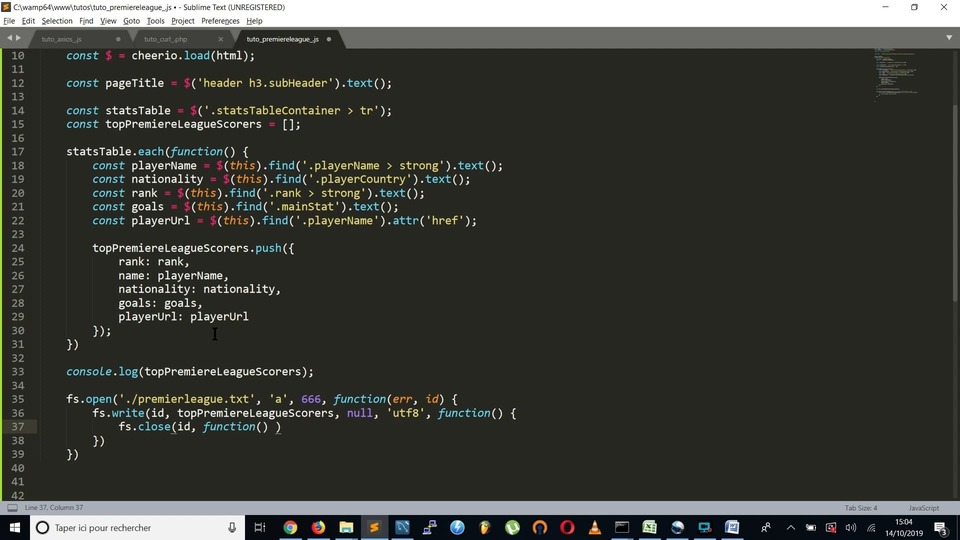

Nightmare is similar to axios or any other request-making library, but what sets it apart is that it uses Electron under the hood, which is similar to PhantomJS but roughly twice as fast and more modern. Now, require both libraries in our `index.js` file: `const Nightmare = require('nightmare')` and `const cheerio = require('cheerio')`.
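As a rough sketch of how the two libraries could fit together: Nightmare loads the page and waits for the JavaScript-rendered content, then Cheerio parses the resulting HTML. The function name `scrapeText`, the URL, and the selectors are placeholders for illustration, not taken from the original post.

```javascript
// Hypothetical helper: loads `url` in Nightmare's Electron browser, waits until
// `waitSelector` appears, and returns the text of every element matching
// `itemSelector`.
async function scrapeText(url, waitSelector, itemSelector) {
  // Required here so the dependencies are only loaded when a scrape actually runs.
  const Nightmare = require('nightmare')
  const cheerio = require('cheerio')

  const nightmare = Nightmare({ show: false }) // show: true opens a visible window

  // goto -> wait -> evaluate are queued browser actions; awaiting the chain
  // resolves with the value returned from evaluate(), and end() closes the browser.
  const html = await nightmare
    .goto(url)
    .wait(waitSelector)
    .evaluate(() => document.documentElement.outerHTML)
    .end()

  // Cheerio gives us jQuery-style selectors over the rendered HTML string.
  const $ = cheerio.load(html)
  return $(itemSelector)
    .map((index, element) => $(element).text().trim())
    .get()
}

// Example call; the URL and selectors below are placeholders.
// scrapeText('https://example.com', '#app', 'h1')
//   .then(texts => console.log(texts))
//   .catch(error => console.error('Scrape failed:', error))
```

Waiting for a selector before calling `evaluate` matters here: without it, the HTML may be captured before the page's scripts have rendered anything.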

# Web scraping in Node.js: install

We create a new folder and run `npm init -y` inside that folder to create a package.json file. Then we add Nightmare and Cheerio from npm as our dependencies with `npm install nightmare cheerio --unsafe-perm=true`. Cheerio makes it easy to select, edit, and view DOM elements.

In recent years, we have seen exponential growth in JavaScript, whether we talk about libraries, plugins, or frameworks. We have moved to Single Page Applications (SPAs); you can learn more about SPAs in the blog post How Single-Page Applications Work. Websites are now more dynamic in nature, i.e. content is rendered through JavaScript.

For instance, let's make a request to any SPA website. I have taken this Vue admin template website and disabled JavaScript using Chrome DevTools, and this is the response I got: Vue Admin Template. The actual content that we need to scrape is rendered within the `div#app` element through JavaScript, so the methods we used to scrape data in the previous post fail; we need something that can run the JavaScript files the way our browsers do.

We will use web automation tools and libraries like Selenium, Cypress, Nightmare, Puppeteer, and x-ray, or headless browsers like PhantomJS. Why these tools and libraries? They are generally used by software testers in the tech industry for software testing. All of them open a browser instance in which our website can run just like in any other browser, and hence execute the JavaScript files that render the dynamic content of the website.

As I explained in my previous post, web scraping is divided into two simple parts, the first of which is fetching data by making an HTTP request.
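To illustrate the `div#app` problem, here is a minimal sketch that checks whether a page's mount point is still empty in the raw HTML. The helper name `isMountPointEmpty`, the sample markup, and the script path are assumptions made for this example; they are not the actual response from the Vue admin template.

```javascript
// Hypothetical helper: reports whether the <div> with the given id has no
// rendered content in the raw HTML (i.e. JavaScript has not filled it in).
// A regular expression is enough for this illustration; use a real HTML
// parser such as Cheerio for anything beyond a quick check.
function isMountPointEmpty(html, id = 'app') {
  const pattern = new RegExp(
    `<div[^>]*\\bid=["']${id}["'][^>]*>([\\s\\S]*?)</div>`,
    'i'
  )
  const match = html.match(pattern)
  // A missing mount point also means there is nothing to scrape.
  return match === null || match[1].trim() === ''
}

// Assumed shape of an SPA response fetched without running JavaScript.
const spaShell = `<!DOCTYPE html>
<html>
  <head><title>Example SPA</title></head>
  <body>
    <div id="app"></div>
    <script src="/static/js/app.js"></script>
  </body>
</html>`

// Assumed shape of the same page after a browser has run its scripts.
const renderedPage = spaShell.replace(
  '<div id="app"></div>',
  '<div id="app"><h1>Dashboard</h1></div>'
)

console.log(isMountPointEmpty(spaShell))     // true
console.log(isMountPointEmpty(renderedPage)) // false
```

A plain HTTP request only ever sees the first form, which is why a tool that actually executes the page's scripts is needed.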

# Web scraping in Node.js: how to

In the previous post, we learned how to scrape static data using Node.js.